Structuring Collaboration for Systems Innovation

I have found that most organizations are reasonably good at starting cross-boundary work.

They hold the kick-off. They sign the MOU. They stand up the working group and agree on the terms of reference.

Then six months later, the energy has drained out, partners are meeting less frequently, and no one can quite explain what happened.

What likely happened is that launching collaboration, practicing it, and sustaining it are three different organizational capabilities. Institutions tend to build the first one and assume the other two will follow. Starting the work draws on capabilities that public institutions have developed over decades: the authority to convene, the legitimacy to set agendas, and the operational machinery to produce documents and hold meetings. Practicing genuine collaboration across boundaries requires a different set of capabilities: conflict navigation, distributed credit, and the patience to hold ambiguity over years. Sustaining collaboration demands institutional support in resourcing and governance that falls outside the norm. Senior leadership generally has the first set. The second set lives further down in the organization and outside it. The third is rare.

What do the people who hold that second set actually look like? They are not programme leads. They are the mid-level coordinators who maintain relationships between formal meetings, the team members who absorb friction before it reaches governance tables, and the practitioners who keep translating between institutional logics that never quite speak the same language.

In research I conducted with the International Social Security Association (ISSA) on collaborative innovation in public institutions, I developed a blueprint for the full arc of cross-boundary collaboration. The blueprint has nine components organized into three dimensions: the conditions that must exist before collaboration can succeed, the practice of collaboration itself, and the architecture that enables results to compound over time. The components map to where I most often saw collaborative initiatives lose ground.

These dimensions overlap. Building conditions and doing the work happen simultaneously, often under pressure, often in the same project. The framework works as a diagnostic. It surfaces what is present and what is missing. As conditions shift, the component that looks solid in month one often quietly erodes by month six.

Building the conditions before you need them

The first section covers what must exist before meaningful collaboration can occur.

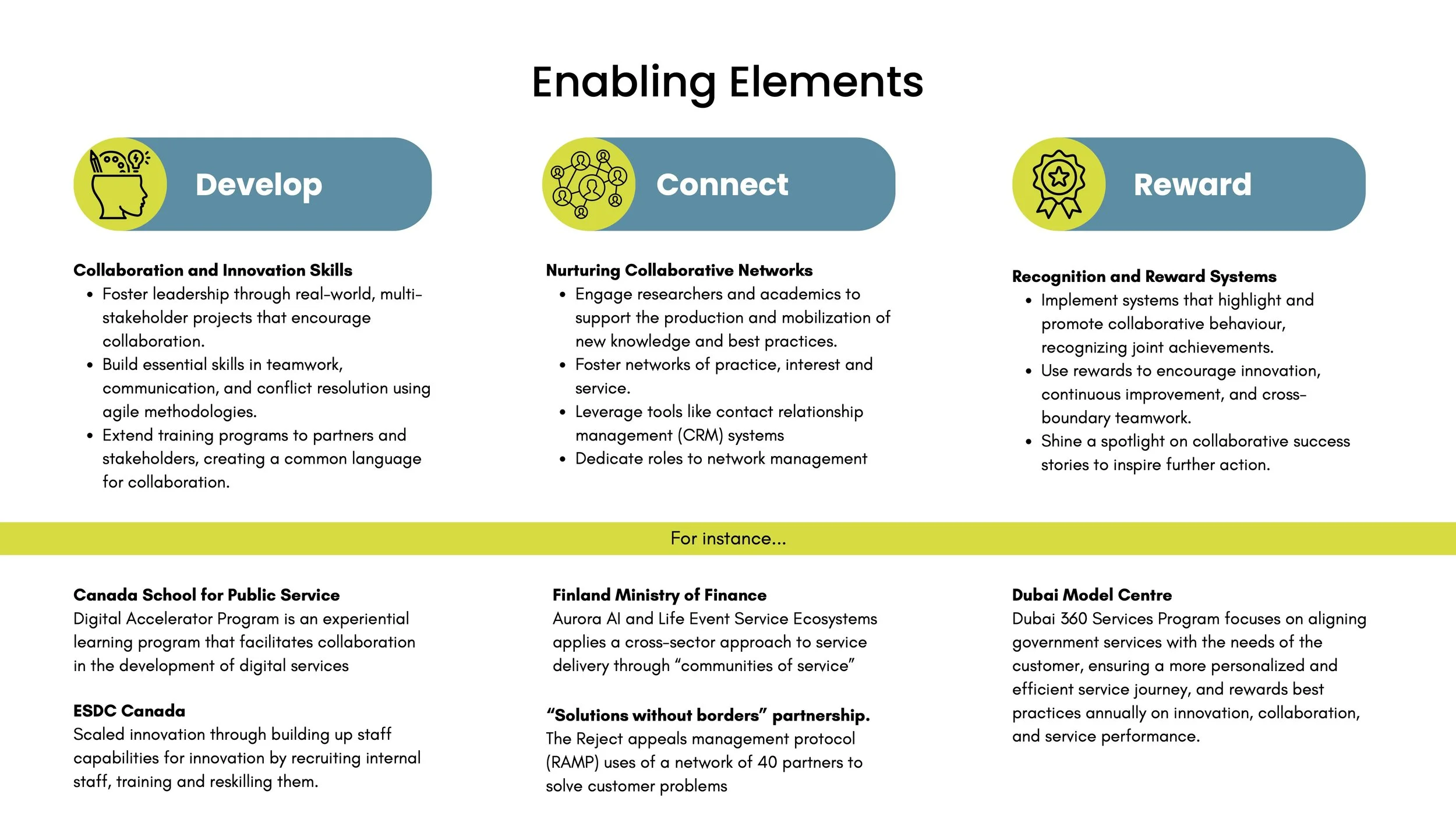

Develop means building the leadership and team skills that cross-boundary work requires: facilitation, conflict navigation, agile decision-making, and the ability to work with people whose institutional logic differs from your own. These skills are commonly treated as nice-to-haves. Institutions that collaborate well treat them as prerequisites.

Connect means building and sustaining the networks that collaboration draws from. These networks include formal partnerships, communities of practice, knowledge networks, and the staff-level relationships across organizations that make things move when they need to. Formal agreements travel slowly. Relationships move at the speed of trust.

Reward means creating incentives that make collaborative behaviour worth doing. This is the component I find most honest to name and most difficult to act on. If performance systems, recognition structures, and resource allocation logic all reinforce working within your own mandate, people will work within their own mandate, regardless of what the strategy says.

Reward is the signal that tells the organization what it actually values. For leaders who sit below where performance architecture gets designed, this can feel out of reach. The reality is more nuanced. Recognition practices, how results are attributed, and which work gets resourced: these sit within reach at multiple levels.

Making the case upward usually requires naming the cost directly: here is what the current incentive structure is producing, who is carrying it, and what we risk losing when they stop. Framing this as a retention and sustainability problem rather than a culture problem might resonate more with senior decision-makers.

That argument carries no guarantee. There are institutions where leaders made the case clearly, and the performance architecture stayed in place. When that happens, the most useful move is to make the misalignment legible: document it, name it in governance conversations, and build a record of what it is costing. Not to win the argument immediately, but so that when it is revisited, the evidence is already there. Where the conversation stalls, demonstrate alignment in what you do control: recognize collaborative behaviour publicly, attribute shared results to shared teams, and name the gap plainly when it produces visible cost.

This is harder in systems where no single actor owns performance design. The logic still holds, but the target shifts. In those contexts, the work is less about persuading a single decision-maker and more about making the misalignment visible across multiple points of authority simultaneously, until the cost becomes harder to ignore than the change itself.

Doing the work

The second section covers the practice of collaborative innovation itself. The techniques in this phase are familiar to anyone who has done facilitation or systems work. What makes them hard is the political reality the technique runs into: power gaps between partners, contested ownership of the problem, and the gravitational pull of premature solutions.

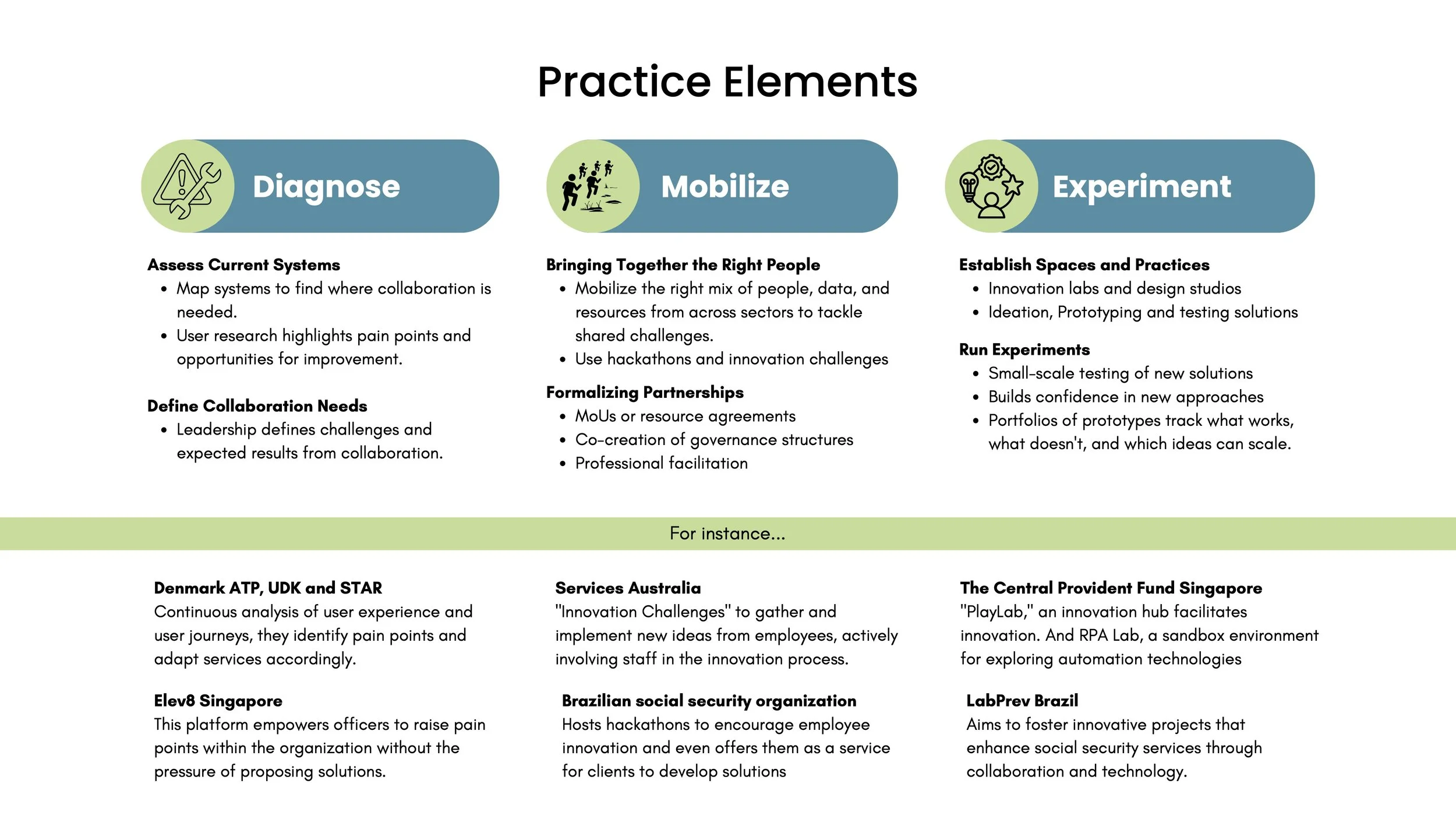

Diagnose means understanding a problem well enough to know what kind of collaboration it actually requires. This means mapping systems, conducting real user research, and building a shared problem frame across organizations before anyone proposes solutions. Premature alignment on solutions that address the wrong problem is a common and costly failure mode in cross-sector work.

Mobilize means bringing people, knowledge, and resources into contact with the problem. This includes hosting the kick-off, developing governance agreements collaboratively with partners rather than presenting them for signature, and engaging facilitators who can hold the container when organizational tensions surface. Beneath all of these techniques sits the harder question of power.

Power asymmetries show up fastest here. When one partner controls the resource envelope, collaborative governance can quietly become a form of ratification of decisions already made. What actually holds: naming the power differential explicitly at the outset, and building specific decision rights into the governance design before the first real conflict surfaces.

Four things need to be written down before that conflict arrives. Which decisions require consensus. Which partner has final say over what. What the escalation path looks like when there is disagreement. How smaller partners can raise concerns without confronting the larger partner directly. Organizations that left these implicit found that the dominant partner's preferences became the default, often along the path of least resistance with no bad faith required.

Who holds this when the power gap is significant? The most effective intervention I have seen is a facilitator or governance lead, someone without a stake in the resource relationship, who names the gap and holds space for it to be addressed before the collaboration moves into execution. That person needs to be commissioned deliberately, by someone who recognizes that the dominant partner will not typically create the conditions for their own power to be named. When no one commissions that function, it tends not to happen.

Experiment means creating conditions to test ideas before scaling them: dedicated innovation spaces, cross-disciplinary teams, and iterative prototyping with actual users. The organizations I studied that did this well maintained a portfolio of experiments rather than betting on a single solution.

Making it last

The third section covers the architecture that enables results to compound over time. Most frameworks I have studied give it less attention than the first two.

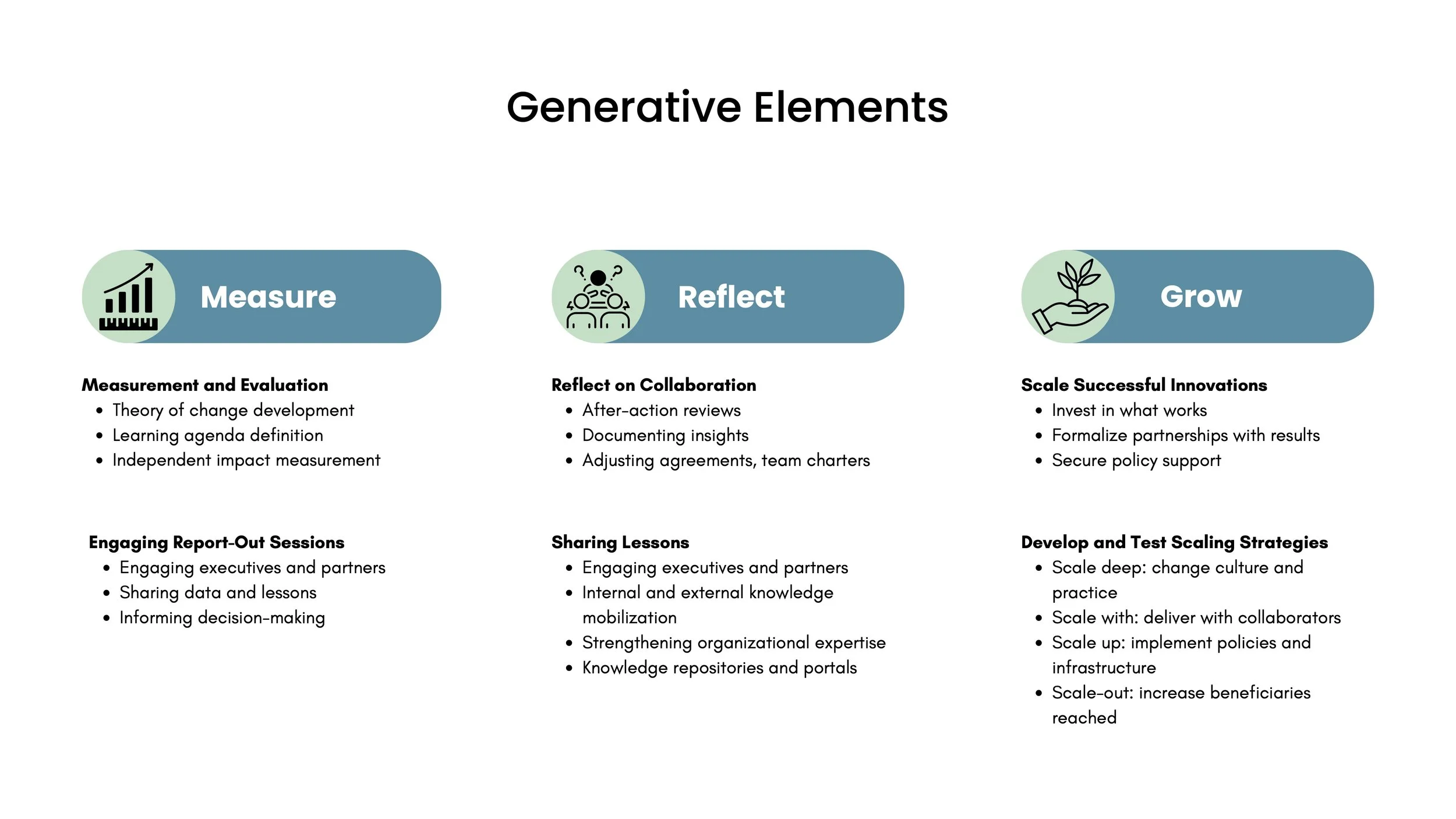

Measure means building a theory of change collaboratively and tracking against it. The point is learning what is working and why; activity counts are a byproduct. Organizations that sustained collaborative innovation over time used measurement to maintain alignment between partners and to make the case for continued investment. They designed reporting as a leadership engagement tool.

Reflect deserves more than it usually gets. In most collaborations I have seen, reflection is treated as debrief: a conversation at the end, when people are tired and already thinking about what comes next. What actually produces institutional learning is treating reflection as infrastructure: scheduled, resourced, and designed to produce outputs that travel.

But reflection also has a political dimension that most frameworks skip past. Structured reflection surfaces uncomfortable truths, and most institutions do not actually want those named in a document that circulates. The result is reflection that is honest in conversation and sanitized in writing, which means the learning does not travel.

What makes honest reflection safe enough to be real? A few things, in practice. Outputs that are designed for internal learning first, not external reporting. A facilitator with no stake in the narrative. Ground rules agreed before the session, not after the discomfort surfaces. And sponsorship from someone senior enough that naming failure is not career-limiting for the people in the room. Without that governance design, structured reflection produces structured amnesia. After-action reviews are a start, but only if the conditions exist for them to be honest.

Grow means scaling what demonstrates results. For this, I borrow the distinction between scaling up, out and deep from Darcy Riddell and Michele-Lee Moore.

Scaling deep means embedding new practices into culture, so the new way of working becomes the normal way of working. Scaling out means expanding reach to more people and places. Scaling up means securing the policy and resource commitments that make expanded implementation possible. Each requires a different set of moves and a different set of relationships.

When capacity is limited, as it almost always is, the choice of mode carries weight. If the practice is fragile and not yet embedded, consider going deep first. Scale too fast from an unstable base and the expansion hollows out the original. If the model is proven and the need is urgent, consider going out. If the structural barrier is the binding constraint, you need to go up.

When partners disagree about whether the model is proven enough to scale out, that disagreement is itself diagnostic. It usually means the evidence is genuinely ambiguous, or that partners are operating with different theories of what counts as proof. Neither can be resolved by going faster. The right move is to surface the disagreement explicitly, agree on what evidence would change the assessment, and design a short period of deliberate testing before committing to a direction. Deferring that conversation is how capable teams destroy good pilots.

Organizations tend to default to whichever mode feels most familiar or most visible to leadership. That default is worth examining, because the Grow phase is also where earlier gaps catch up with you. A collaboration that carried unresolved incentive misalignment from Reward will find that the people who built it drop out before the expansion arrives. A collaboration that left governance implicit in Mobilize will find that expansion surfaces the conflict it deferred.

What the blueprint offers is a way to see the whole arc, and to notice in real time where ground is quietly being lost. The transitions between phases are where I have seen the most attrition. The infrastructure that was barely sufficient at a smaller scale fails at a larger one. The capabilities that got the work started fall short of what the work now requires.

This is the launching-versus-sustaining problem at every scale. The institutions whose cross-boundary work lasts are the ones that build sustaining capability while there is still time to build it well, before the energy has drained and the visible cost arrives. The question worth sitting with: which parts of this does your organization actually have, and which ones is it assuming will take care of themselves?

You can read the paper at https://www.issa.int/node/264386